Scaling Serendipity

A S.M.A.R.T Framework for AI-Augmented Innovation

HCI Institute · Carnegie Mellon University

Abstract

Despite record investments in R&D, scientific and technological breakthroughs are becoming less frequent, and ideas are getting harder to find.

One of the most pervasive barriers is cognitive fixation: as experts become increasingly specialized, their expertise can paradoxically narrow their creative vision and make it difficult to look beyond low-hanging fruit. Even when teams manage to identify a promising inspiration, transferring its underlying principles to a concept in a new context presents a second major obstacle. Finally, bold ideas frequently die in the so-called "fuzzy front end" of R&D because they are not systematically de-risked.

History consistently shows that the most transformative innovations emerge not from deeper digging within a single field, but from unexpected connections across domains:

NASA engineers turned to origami principles to fit a massive solar array into a narrow rocket.

The streamlined beak of a kingfisher inspired a nose design that reduced the tunnel booms produced by high-speed trains.

A car mechanic adapted a YouTube party trick for removing wine corks to create the Odón device for difficult childbirths.

Despite its power, analogical reasoning remains one of the most underutilized tools in innovation. Because the process is cognitively demanding and highly sensitive to fixation, it too often depends on chance—emerging from rare moments of serendipity rather than systematic discovery.

This white paper introduces SMART (Search, Map, Adapt, Refine, and Test), a human-AI collaborative framework that turns analogical discovery from serendipity into a systematic, end-to-end process.

The impact of our framework has been validated in peer-reviewed research, enterprise collaborations, and global innovation challenges, including multiple awards in top-tier HCI and machine learning venues (CHI, CSCW, KDD) and publications in top journals such as Proceedings of the National Academy of Sciences.

Search at Scale

Experts face a fundamental challenge when searching for analogical solutions in distant fields: the same expertise that fuels insight can trap them in familiar approaches. Existing computational approaches face similar limits. Keyword and embedding-based search methods rely on surface co-occurrence, whereas analogies depend on deep structural similarities that lie beneath the surface. LLM-based approaches, meanwhile, suffer from mode collapse, which yields increasingly homogeneous ideas over time.

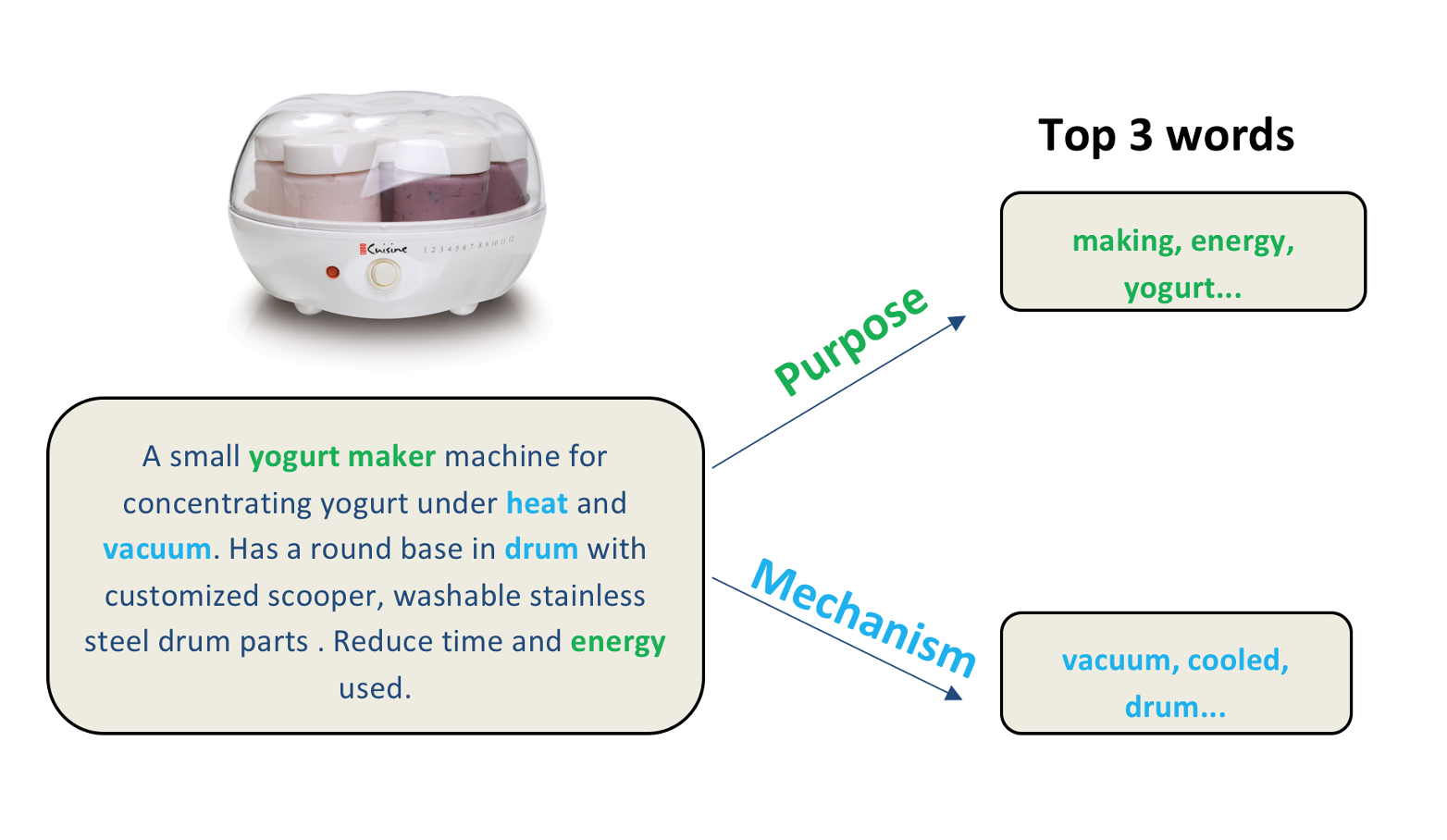

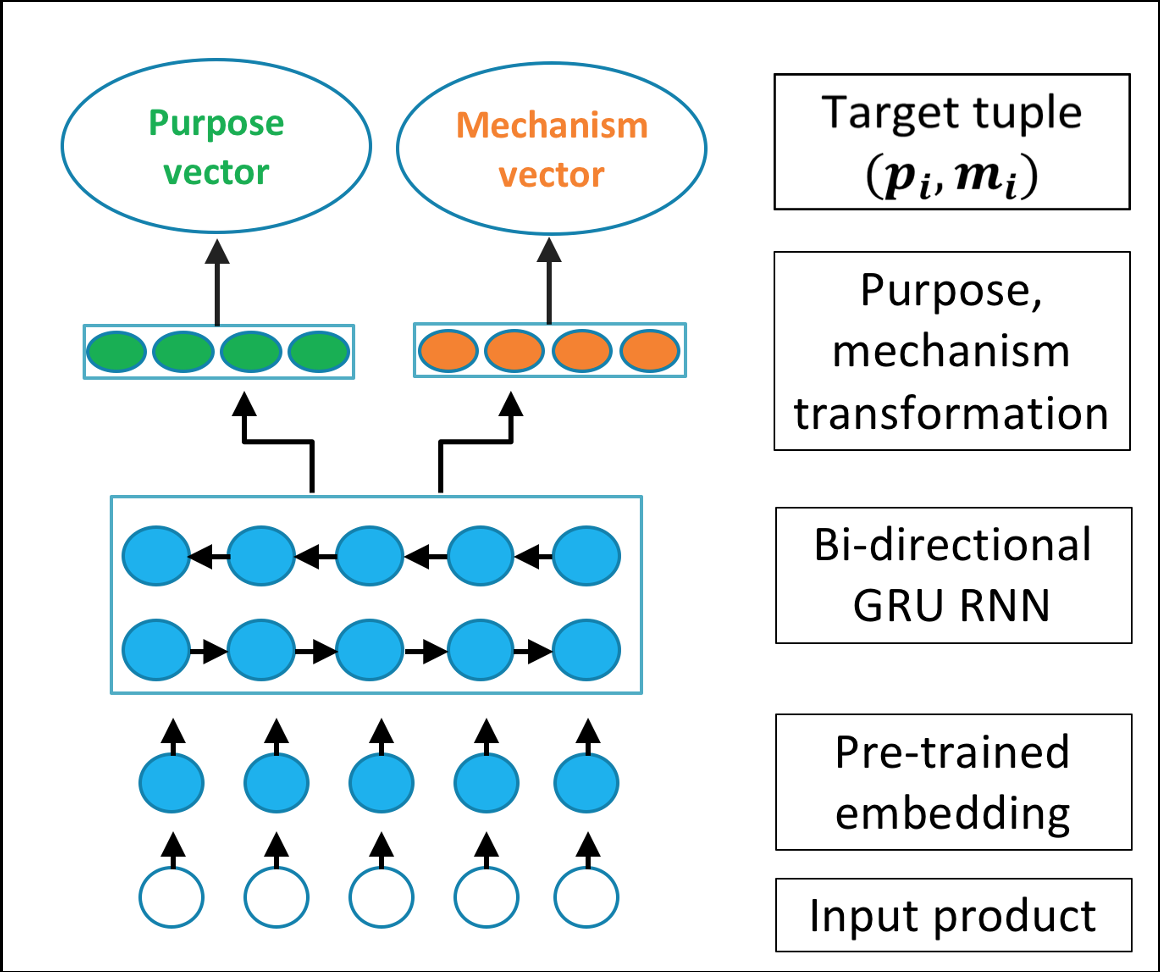

We introduced a new way of learning analogous structure at scale through purpose–mechanism schemas: learning different vector embeddings for the purpose a system is trying to achieve versus the mechanism it uses to achieve that purpose.

A yogurt maker's purpose is to make yogurt, while its mechanism involves using a vacuum-cooled drum. Searching for ideas with a similar purpose but a different mechanism surfaces analogous inspirations, such as sharkskin microgrooves for the purpose of "reducing friction without lubricants."

In controlled studies, this method doubled the number of high-quality ideas generated. It can also unlock the value of millions of unstructured ideas that are functionally relevant to a target problem.
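The idea of ranking by "similar purpose, different mechanism" can be illustrated with a minimal sketch. It is not the authors' learned model: the `Idea` class, the `analogy_score` formula, the `mechanism_weight` parameter, and the toy vectors are all assumptions made for illustration, standing in for embeddings that a real system would learn from text.

```python
import math
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    purpose: list[float]    # hypothetical purpose embedding
    mechanism: list[float]  # hypothetical mechanism embedding


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def analogy_score(query, candidate, mechanism_weight=1.0):
    """Reward purpose similarity and penalize mechanism similarity.

    The subtraction and the weight are illustrative assumptions.
    """
    purpose_sim = cosine(query.purpose, candidate.purpose)
    mechanism_sim = cosine(query.mechanism, candidate.mechanism)
    return purpose_sim - mechanism_weight * mechanism_sim


def find_analogies(query, corpus, top_k=3):
    """Return the top_k candidates with the highest analogy score."""
    ranked = sorted(corpus, key=lambda c: analogy_score(query, c), reverse=True)
    return ranked[:top_k]


# Toy, hand-made vectors (not real embeddings).
query = Idea("reduce friction in a pipe", [1.0, 0.0, 0.0], [1.0, 0.0])
corpus = [
    Idea("oil-based lubricant", [0.9, 0.1, 0.0], [0.9, 0.1]),     # same purpose, same mechanism
    Idea("sharkskin microgrooves", [0.9, 0.1, 0.0], [0.0, 1.0]),  # same purpose, new mechanism
    Idea("yogurt maker", [0.0, 0.0, 1.0], [0.5, 0.5]),            # unrelated purpose
]

for idea in find_analogies(query, corpus):
    print(idea.name, round(analogy_score(query, idea), 3))
```

With these toy vectors, the sharkskin entry ranks first because it shares the query's purpose while using a different mechanism, whereas the lubricant entry is penalized for being a near-duplicate of the existing approach.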

Map to Target Domain

Simply finding a novel analogy is not enough; R&D teams often struggle to "map" the abstract concept to their specific, real-world problem. This cognitive gap is where most analogical innovation fails.

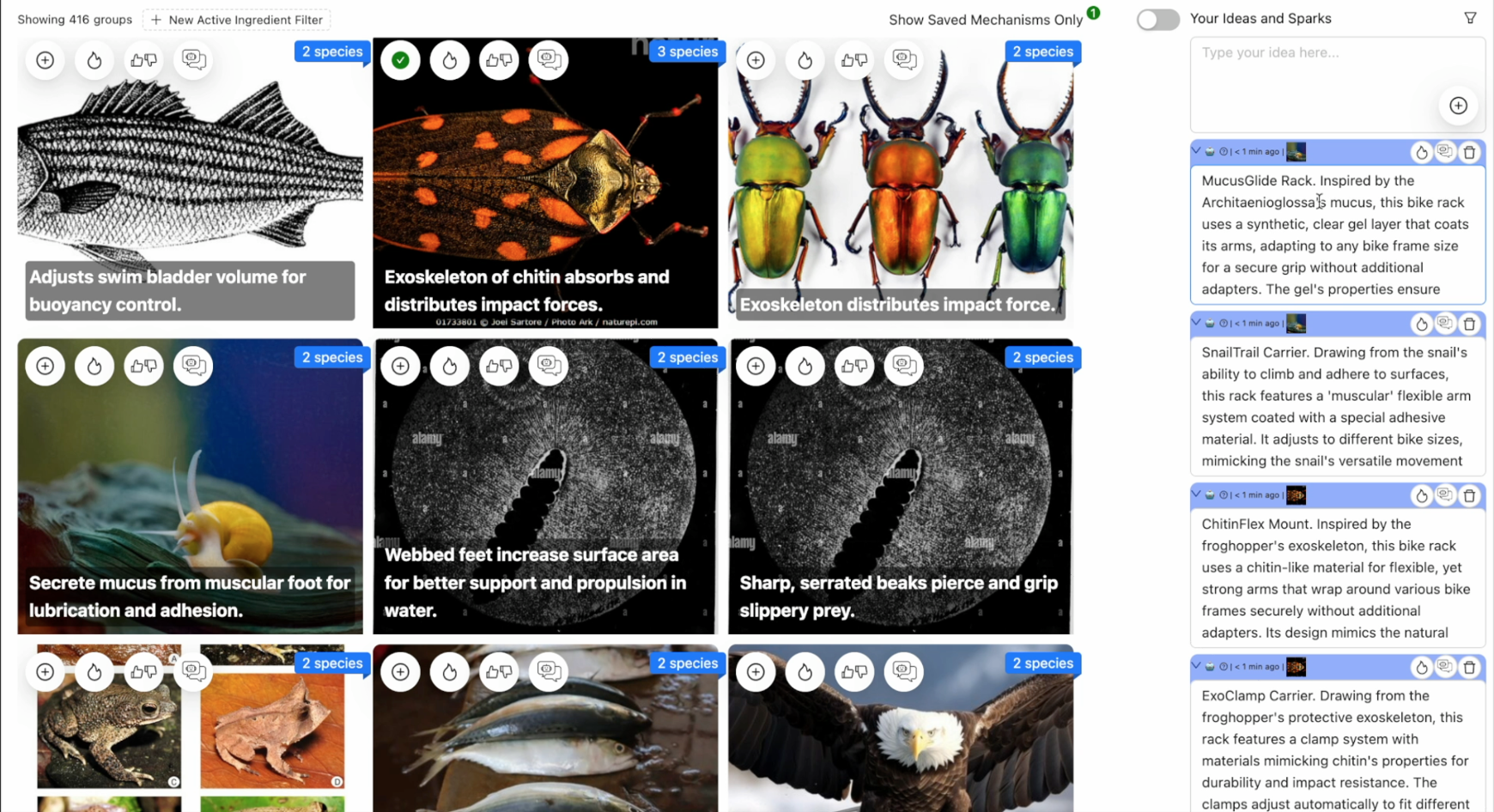

We developed BioSpark, a system that computationally bridges this gap. It not only finds inspirations but automatically transfers them to the target domain, generating specific application scenarios and suggesting concrete, manufacturable materials.

A designer working on a bike rack struggles with "snail mucus" as inspiration. BioSpark translates this into a concrete scenario: the snail's adaptive adhesion could become a hydrogel-based clamp for varied bike frames, with suggestions of specific hydrogels that remain pliable in winter conditions.

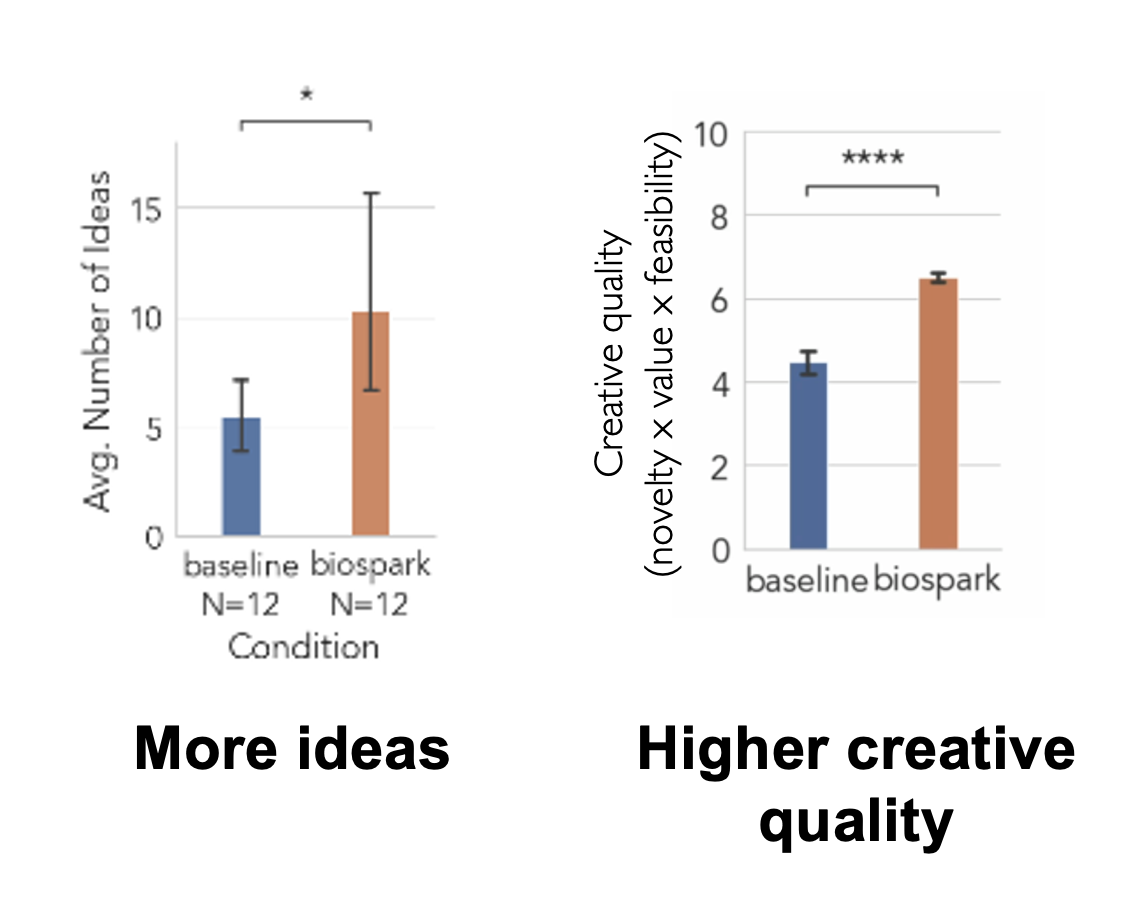

In studies, designers using this system explored a wider design space and produced significantly more creative ideas than those using standard generative AI.

Adapt with Human Expertise

Breakthroughs rarely come from adopting an external idea wholesale; they come from experts adapting an idea's core principle using their deep domain knowledge. The Wright brothers, for instance, adapted the principle of shear from a cardboard box, not the material itself.

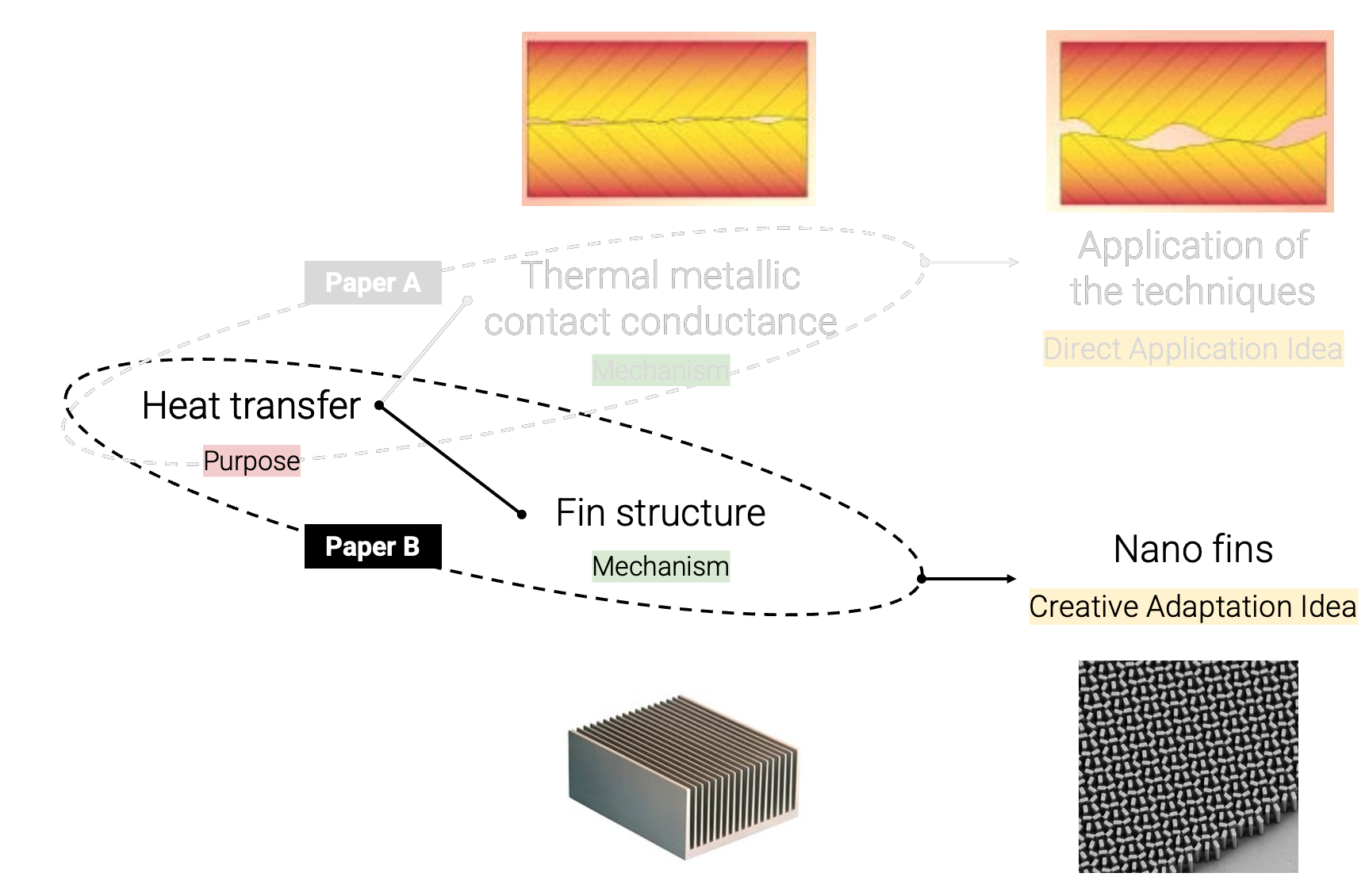

Our research focuses on computationally facilitating this expert adaptation. We found that feeding experts targeted analogical ideas (e.g., a "fin structure") prompts creative leaps (e.g., to "nanoscale fins" for chip cooling).

We also applied this in collaborative settings with organizations like Conservation X Labs, developing algorithms to match teams from diverse domains that share a deep structural problem. After refining our matching algorithm to find the "sweet spot" of cognitive distance, teams showed significant improvements in idea novelty and usefulness.
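One way to picture a "sweet spot" of cognitive distance is a score that peaks at an intermediate distance between two teams' problem representations and falls off when the teams are too similar or too far apart. The sketch below assumes this reading; the source does not specify the matching algorithm, so the cosine distance, the Gaussian-shaped score, the `target` and `width` parameters, and the team vectors are all hypothetical.

```python
import math
from itertools import combinations


def cosine_distance(a, b):
    """1 - cosine similarity; 0 means identical direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - (dot / norm if norm else 0.0)


def sweet_spot_score(distance, target=0.5, width=0.2):
    """Gaussian-shaped preference that peaks at the target distance.

    target and width are illustrative assumptions, not published values.
    """
    return math.exp(-((distance - target) ** 2) / (2 * width ** 2))


def match_teams(team_vectors, target=0.5, width=0.2):
    """Score every pair of teams and return pairs sorted best-first."""
    pairs = []
    for (name_a, vec_a), (name_b, vec_b) in combinations(team_vectors.items(), 2):
        distance = cosine_distance(vec_a, vec_b)
        score = sweet_spot_score(distance, target, width)
        pairs.append((score, name_a, name_b, distance))
    return sorted(pairs, reverse=True)


# Toy problem vectors for four hypothetical teams.
teams = {
    "team_a": [1.0, 0.0],
    "team_near": [0.99, 0.14],   # almost the same problem framing as team_a
    "team_mid": [0.5, 0.866],    # moderately distant from team_a
    "team_far": [0.0, 1.0],      # very distant from team_a
}

for score, a, b, distance in match_teams(teams):
    print(f"{a} + {b}: distance={distance:.2f}, score={score:.2f}")
```

Under these assumptions, the pairing of `team_a` with `team_mid` scores highest, since their distance sits closest to the chosen target.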

Refine and Iterate

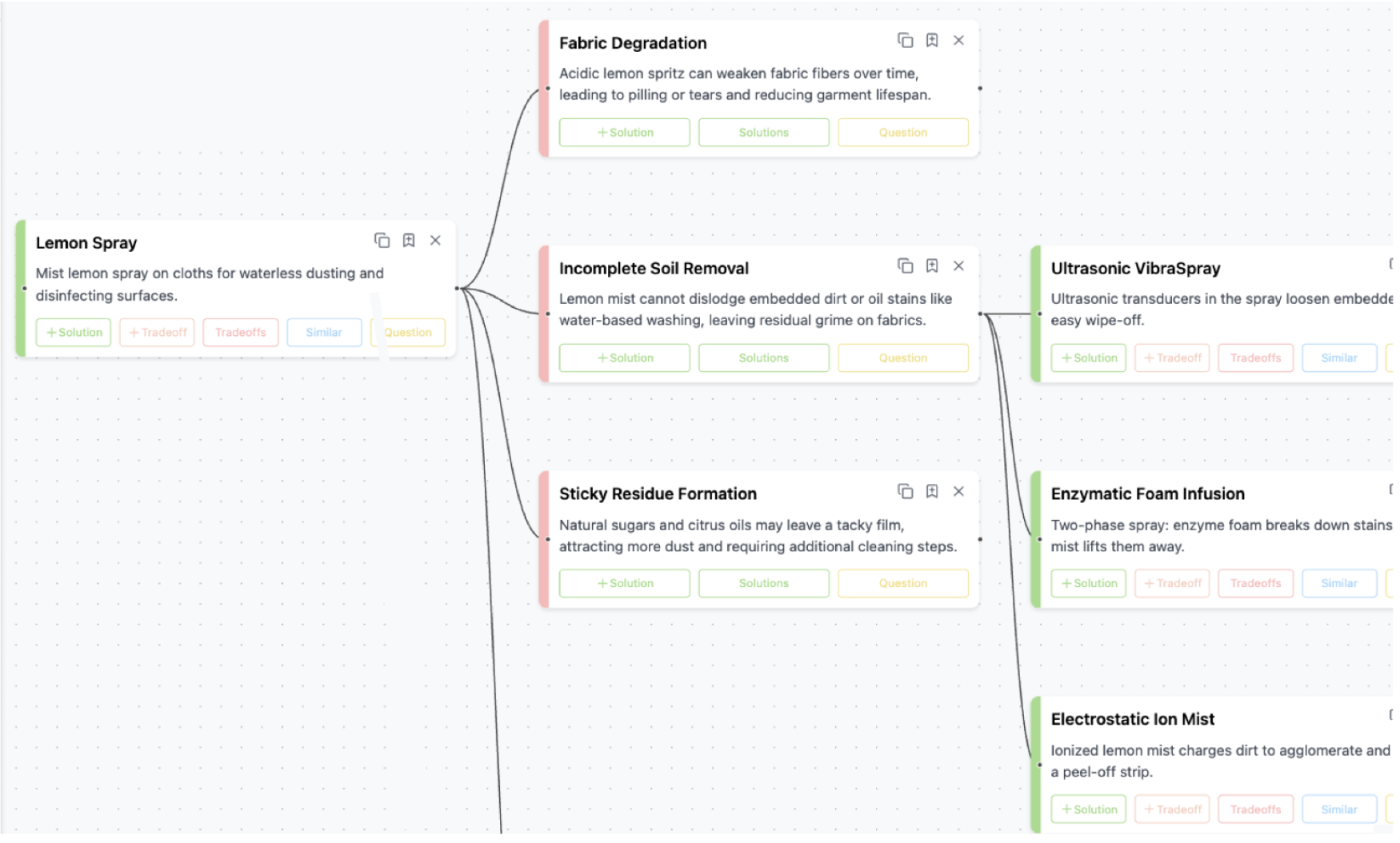

Innovation is not a single "lightning strike" but a complex, branching process of refinement. Dyson's vacuum, for example, required roughly 5,000 iterations to solve a cascade of sub-problems. Standard AI tools, with their linear, conversational interfaces, are fundamentally mismatched to this non-linear exploration, causing users to abandon paths prematurely.

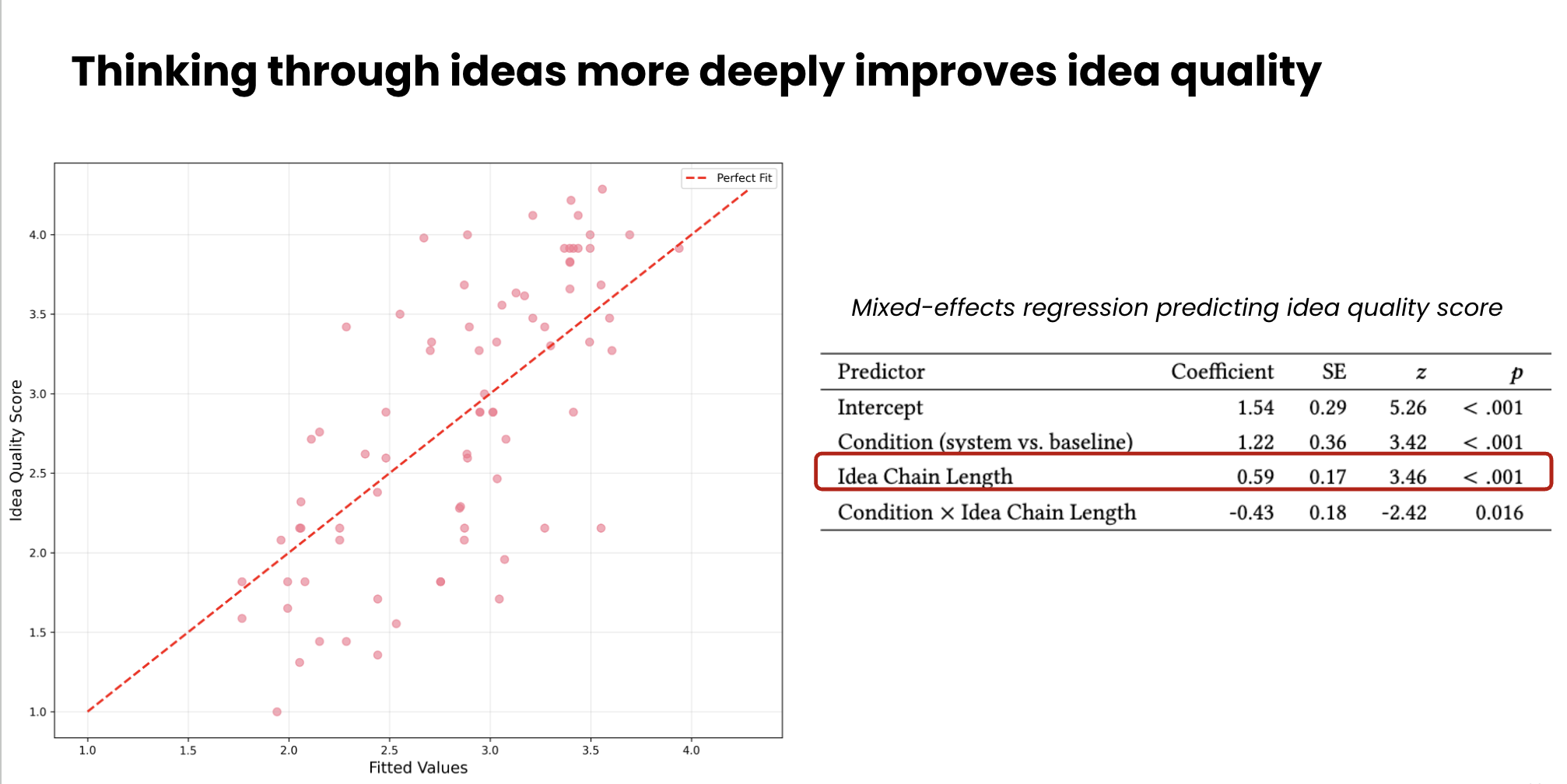

We developed FlexMind, a system that provides a non-linear canvas where an AI partner actively helps the user explore and solve emergent sub-problems. This "tool for thought" mirrors the branching nature of R&D.

We found that users of FlexMind explored many more solutions in greater depth, and statistical analysis confirmed that this deeper exploration leads to higher-quality ideas, as rated by senior R&D leaders.
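The contrast between a linear chat transcript and a branching canvas can be made concrete with a small tree data structure. This is only a sketch of the general idea, not the system's implementation: the `Node` class, the `kind` labels, the helper functions, and the example sub-problems are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    text: str
    kind: str  # "idea" or "subproblem" (labels assumed for this sketch)
    children: list["Node"] = field(default_factory=list)

    def branch(self, text, kind):
        """Attach a new child node and return it so exploration can continue."""
        child = Node(text, kind)
        self.children.append(child)
        return child


def open_subproblems(node):
    """Collect sub-problems that have no proposed solution beneath them yet."""
    found = []
    if node.kind == "subproblem" and not node.children:
        found.append(node.text)
    for child in node.children:
        found.extend(open_subproblems(child))
    return found


def depth(node):
    """Number of levels in the tree rooted at this node."""
    return 1 + max((depth(child) for child in node.children), default=0)


# Illustrative canvas built around a hydrogel bike-rack clamp idea.
root = Node("Hydrogel-based clamp for varied bike frames", "idea")
winter = root.branch("Does the clamp stay pliable in winter?", "subproblem")
winter.branch("Choose a hydrogel that remains pliable in the cold", "idea")
root.branch("Does the clamp grip frames of different shapes?", "subproblem")

print(open_subproblems(root))
print(depth(root))
```

Keeping unresolved sub-problems addressable in the tree, rather than buried in a scrolling conversation, is what lets a user (or an AI partner) return to a branch that would otherwise be abandoned.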

Test Viability

Turning inspiration into impact requires more than imagination—it demands evidence that an idea can work and a path to prove it. Our latest work-in-progress, Inspyral, extends this capability by mapping early ideas to analogous solutions across domains to help teams evaluate viability, identifying potential collaborators and implementation partners, and estimating technology readiness and potential impact.

When exploring novel flossing technologies, Inspyral surfaces analogs in soft-robotic cleaning systems that use magnetic actuation to navigate confined spaces—offering concrete cues about materials, control strategies, and feasible validation steps. It connects these insights to potential partners and next-step experiments.

Building Your Innovation Pipeline

Our research has established a computational framework for making innovation a scalable, evidence-driven process. We are now operationalizing this work into an end-to-end, human-AI collaborative platform that accelerates the full journey from idea to impact.

This system unites systematic Search, guided Mapping, expert Adaptation, iterative Refinement, and rapid Testing into a cohesive ecosystem. At its core, the platform is designed to augment—not replace—human expertise, while leveraging AI in a way that avoids the risk of linear thinking and homogeneity.

We are now inviting forward-looking R&D partners to collaborate on pilot deployments, helping shape the next generation of innovation systems.

Collaborative Study with R&D Teams

To tailor this platform to your specific needs, one way to engage is a "white glove" co-design partnership. Our proposed collaboration follows a structured, multi-phase approach:

Discovery & Scoping

Deep-dive discussions to map your innovation pipeline and identify a high-impact R&D problem with clear success metrics.

Co-Design & Iteration

Work directly alongside your experts as co-design partners, deploying our engine and rapidly iterating based on real-time feedback.

Pilot & Validation

A structured pilot study on real R&D tasks, followed by a month-long deployment to assess sustained impact and long-term value.

References

- Scaling up analogical innovation with crowds and AI. Proceedings of the National Academy of Sciences 116, no. 6 (2019): 1870-1877.

- Accelerating innovation through analogy mining. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 235-243. 2017. (Best Paper Award)

- Solvent: A mixed initiative system for finding analogies between research papers. Proceedings of the ACM on Human-Computer Interaction 2, no. CSCW (2018): 1-21.

- Scaling creative inspiration with fine-grained functional aspects of ideas. In Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems, pp. 1-15. 2022.

- Augmenting scientific creativity with an analogical search engine. ACM Transactions on Computer-Human Interaction 29, no. 6 (2022): 1-36.

- Analogy mining for specific design needs. In Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems, pp. 1-11. 2018.

- BioSpark: Beyond Analogical Inspiration to LLM-augmented Transfer. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pp. 1-29. 2025. (Honorable Mention Award)

- Inkspire: Supporting design exploration with generative AI through analogical sketching. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pp. 1-18. 2025.

- FlexMind: Supporting Deeper Creative Thinking with LLMs. arXiv preprint arXiv:2509.21685 (2025).